Google and OpenAI's Last Chance to Beat Anthropic

Deeper Thinking vs. Smarter Geometry

Google and OpenAI just made their last call. You will not hear that in a press conference, obviously. But read the architecture notes — here and here — behind their latest LLMs and the message is right there.

And guess what. They are still betting on the same trick they have been betting on for two years. You have probably already heard the names: inference-time compute scaling — chain-of-thought, tree search, reward-model verification.

That is the machinery behind every modern reasoning model — from o3 to GPT-5.4 Thinking to Gemini 3.1 Pro. The sales pitch sounds logical, even scientific, at first glance: if reasoning is hard, let the model think longer.

If that is not enough, let it explore more branches. If that still is not enough, make it generate more intermediate tokens, score more candidate answers, verify more paths, and keep burning compute until the right answer finally appears.
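To make the cost structure concrete, here is a minimal best-of-N sketch. It is a toy, not how any vendor's system works: `generate`, `score`, and `TARGET` are hypothetical stand-ins for a sampled model answer, a reward model, and the correct answer. It illustrates only one point: the quality gain comes from drawing more candidates, so the number of generator calls grows in step with N.

```python
import random

TARGET = 42  # hypothetical "correct answer" for the toy task


def generate(rng):
    """Stand-in for one sampled model answer: a random guess in [0, 99]."""
    return rng.randint(0, 99)


def score(answer):
    """Stand-in for a reward model or verifier: higher is better."""
    return -abs(answer - TARGET)


def best_of_n(n, seed=0):
    """Draw n candidates and keep the highest-scoring one.

    Returns (best_answer, generator_calls) so the cost is explicit.
    """
    rng = random.Random(seed)
    candidates = [generate(rng) for _ in range(n)]
    return max(candidates, key=score), len(candidates)


if __name__ == "__main__":
    for n in (1, 4, 16, 64):
        best, calls = best_of_n(n)
        print(f"n={n:>2}  calls={calls:>2}  error={abs(best - TARGET)}")
```

Because every run reuses the same seed, the larger runs contain the smaller runs' candidates. The best score can therefore only stay flat or improve as N grows, while the call count rises linearly.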

That is where the quarter trillion goes.

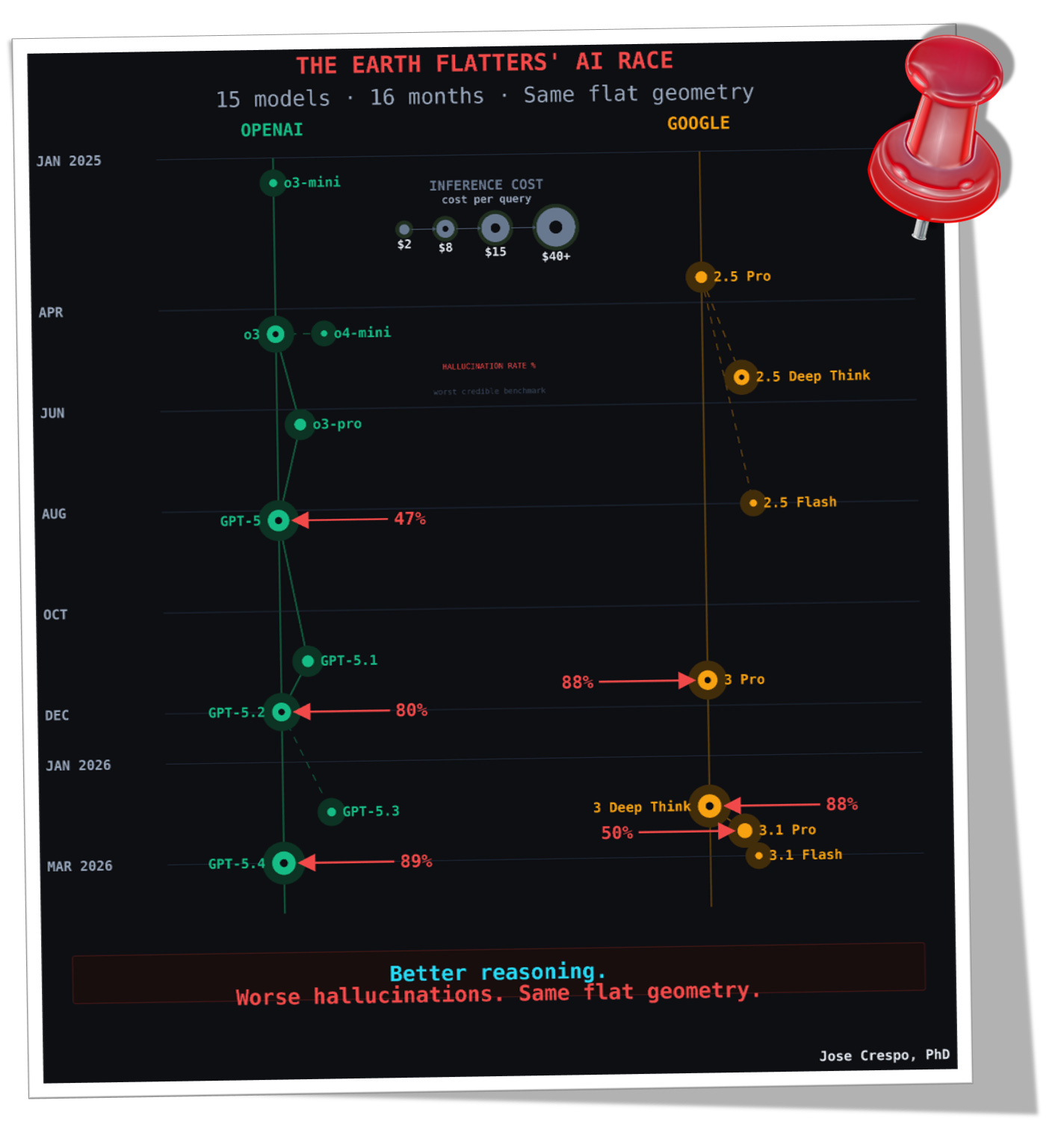

15 models in 16 months is proof enough that something is rotten, and the smell is not coming from Denmark. 🙄

Look at the chart below: green for OpenAI, gold for Google. Each launch comes wrapped in marketing rhetoric. Each model is sold as a leap, yet under the hood it does the same thing as the last: thinking longer, searching harder, hallucinating more, and burning more money at inference time.

Repeating the Same AI Seasons — and the Same AI Winters

The cracks are already visible. But to see them clearly, you need the wider frame… because without it, the present moment looks far more original than it actually is.

What the industry now calls inference-time compute scaling — chain-of-thought, tree search, reward verification — is a bundle of ideas from the 1940s and 1950s, repackaged under cleaner labels and sold back to you as a revolution.

The trick has worked before. More than once.

The two animations below compress roughly eighty years of AI history into a single recurring cycle. Once you see the rhythm, you will not be able to unsee it. AI does not advance in a straight line. It moves by seasons:

Breakthrough.

Explosion.

Limits exposed.

AI Winter.

Then the cycle begins again.

Watch the pattern unfold: from McCulloch and Pitts’ binary neuron in 1943, through the perceptron boom and the first AI winter, to the return of backpropagation, the second AI winter, and finally the deep-learning explosion that seemed — for a while — to have broken the loop for good.

But, unfortunately, it hadn’t.

Now zoom into the moment that fooled everyone.

In 1969, Minsky and Papert proved that a single-layer perceptron could not solve even a basic XOR problem — one straight line cannot separate what needs separating. That critique helped trigger the first AI winter.
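The impossibility takes four lines of algebra. A single unit outputs 1 when `w1*x1 + w2*x2 + b > 0`. XOR then demands `b <= 0`, `w1 + b > 0`, `w2 + b > 0`, and `w1 + w2 + b <= 0`. Adding the two middle constraints gives `w1 + w2 + 2b > 0`, while adding the first and last gives `w1 + w2 + 2b <= 0`, a contradiction. The sketch below is only an empirical companion to that argument, not a proof: it searches a coarse grid of weights (the grid and the helper names are illustrative choices) and finds a separating line for AND and OR but none for XOR.

```python
from itertools import product

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
GATES = {
    "AND": [0, 0, 0, 1],
    "OR": [0, 1, 1, 1],
    "XOR": [0, 1, 1, 0],
}

# Illustrative search grid: -4.0 to 4.0 in steps of 0.5.
GRID = [i / 2 for i in range(-8, 9)]


def predict(w1, w2, b, x1, x2):
    """Single threshold unit: one straight line through the input plane."""
    return 1 if w1 * x1 + w2 * x2 + b > 0 else 0


def find_line(targets, grid=GRID):
    """Return the first (w1, w2, b) on the grid that fits all four points, else None."""
    for w1, w2, b in product(grid, repeat=3):
        if all(predict(w1, w2, b, x1, x2) == t
               for (x1, x2), t in zip(INPUTS, targets)):
            return (w1, w2, b)
    return None


if __name__ == "__main__":
    for name, targets in GATES.items():
        print(name, find_line(targets))
```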

Then in 1986, Rumelhart, Hinton, and Williams showed the escape route: add a hidden layer, make it trainable through backpropagation, and the network can combine several straight cuts into a non-linear decision boundary.

The XOR problem was solved. The field celebrated. But here is the part nobody talked about at the celebration: backpropagation did not bend the space. It just learned to make more cuts inside the same flat geometry. The geometry never changed. Only the bill did.
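Here is what "more cuts" looks like in the smallest possible case. This is a sketch under simplifying assumptions: the weights are set by hand rather than learned by backpropagation, and the units use a hard step instead of the smooth activations of the 1986 work. Two hidden units each draw one straight line, and the output unit keeps the strip between them.

```python
def step(z):
    """Hard threshold: 1 on the positive side of the line, 0 otherwise."""
    return 1 if z > 0 else 0


def xor_net(x1, x2):
    """Two-hidden-unit network with hand-picked (not learned) weights."""
    h_or = step(x1 + x2 - 0.5)    # cut 1: fires unless both inputs are 0
    h_and = step(x1 + x2 - 1.5)   # cut 2: fires only when both inputs are 1
    # Output fires when the point lies past cut 1 but not past cut 2.
    return step(h_or - h_and - 0.5)


if __name__ == "__main__":
    for x1 in (0, 1):
        for x2 in (0, 1):
            print(x1, x2, xor_net(x1, x2))
```

Both hidden units are ordinary linear separators. The non-linear boundary appears only because the output layer combines them.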

As more units and layers are added, the network partitions the space into an increasing number of regions. Minsky might have seen it as using a cannon to kill a fly, but now the perceptron was back on steroids.
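A standard counting result from geometry puts a number on this. In the plane, n straight lines in general position create at most 1 + n + n(n-1)/2 regions. In d dimensions, n hyperplanes create at most the sum of C(n, k) for k from 0 to d. The sketch below assumes a single hidden layer in which each unit contributes one such cut; `max_regions` is an illustrative helper name, and the bound says nothing about deeper stacks.

```python
from math import comb


def max_regions(n_cuts, dim=2):
    """Upper bound on regions formed by n_cuts hyperplanes in dim dimensions."""
    # comb(n, k) is 0 when k > n, so small n_cuts are handled automatically.
    return sum(comb(n_cuts, k) for k in range(dim + 1))


if __name__ == "__main__":
    for n in (1, 2, 4, 8, 16, 32):
        print(f"{n:>2} cuts -> at most {max_regions(n)} regions in 2D")
```

In two dimensions the count grows roughly with the square of the number of cuts. Each extra region is bought with extra units, which is the cost the paragraph above describes.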

Now watch the animation… you can see it clearly: backpropagation escapes the perceptron’s old XOR trap not by bending the geometry, but by learning enough straight cuts.

The XOR problem was solved. But the cycle did not end there.

This is where the free ride ends. Everything above was only the runway. Below is where the real argument begins — with animations, diagrams, and peer-reviewed mathematics showing why Google and OpenAI’s reasoning push still runs on old search-era machinery, why models often hallucinate more as inference grows longer, and why Anthropic’s own technical trail keeps circling the same conclusion: the geometry underneath today’s AI is fundamentally wrong.
