Why AI Drones Fail in the Real World - And the Math That Could Fix Them

From hills and winter to GPS spoofing and moving targets, drone failures expose the limits of flat AI and point to a geometry-first solution.

It is time to judge current AI on its own merits in the real world, which is not a tidy chatbot window, not something you can summarize in a benchmark, and least of all a controlled demo. In a crisis, our familiar world can turn brutal, with conditions shifting faster than any frozen AI model can adapt.

And here is the perfect example: drones, and the dream of fusing them with AI so that one machine handles everything from civilian logistics to military strikes. What could show us the supposedly limitless capabilities of AI in the real world better than this?

So yeah, we are right to expect that if real AI were already available, it would have been deployed in these machines first, especially the precision military hunters. They should look almost extraterrestrial in capability, executing operations no human-controlled device could match, above all synchronized maneuvers of dozens of drones moving through real landscapes, under real pressure, in real time.

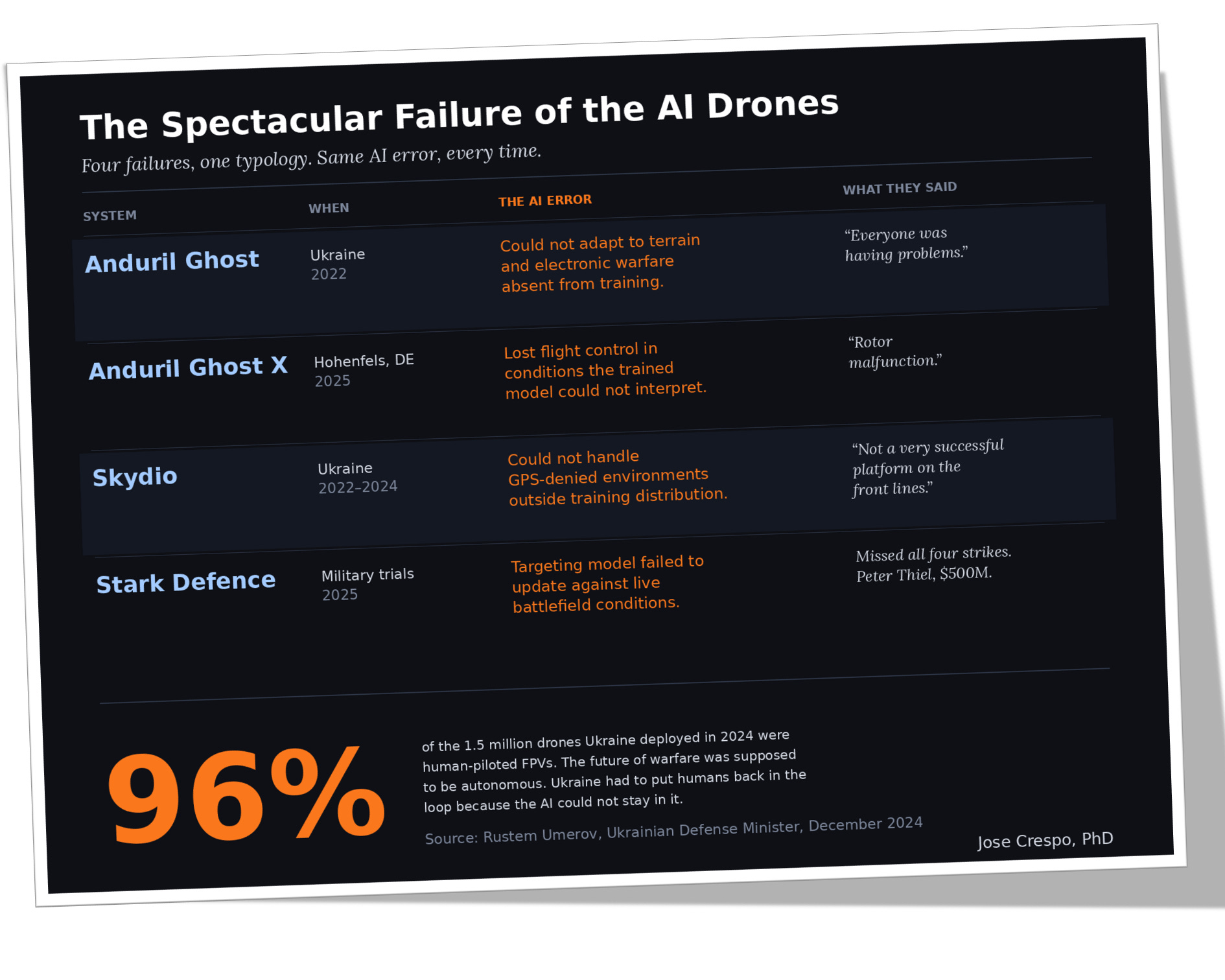

Now wake up to 2026, and to the years that follow if we do not change the flat AI paradigm. What do you get? The miserable “state of the art” shown in the table below.

You see? Many billions of dollars spent on machines that cannot tell a hill from a valley, cannot hold altitude when the temperature drops, and, my favorite, cannot hit a moving target the way any twelve-year-old does on a PlayStation: by aiming at where the enemy will be, not where it is.
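To make the "aim at where the enemy will be" idea concrete, here is a minimal sketch of a textbook lead calculation in Python. It is not taken from any real drone system. It assumes a flat 2D plane, a target moving at constant velocity, and an interceptor with a fixed speed, and the name `intercept_point` and its parameters are hypothetical.

```python
import math

def intercept_point(shooter, target, target_vel, speed):
    """Earliest intercept of a constant-velocity target (illustrative sketch).

    shooter, target: (x, y) positions; target_vel: (vx, vy); speed: interceptor speed.
    Returns (time, (aim_x, aim_y)), or None if no intercept is possible.
    """
    rx, ry = target[0] - shooter[0], target[1] - shooter[1]
    vx, vy = target_vel

    # Intercept condition: |r + v*t| = speed * t
    # which expands to: (v.v - speed^2) t^2 + 2 (r.v) t + r.r = 0
    a = vx * vx + vy * vy - speed * speed
    b = 2.0 * (rx * vx + ry * vy)
    c = rx * rx + ry * ry

    if abs(a) < 1e-12:
        # Equal speeds: the quadratic degenerates to a linear equation.
        if abs(b) < 1e-12:
            return None
        roots = [-c / b]
    else:
        disc = b * b - 4.0 * a * c
        if disc < 0:
            return None  # the target can never be reached
        sq = math.sqrt(disc)
        roots = [(-b - sq) / (2.0 * a), (-b + sq) / (2.0 * a)]

    future = [t for t in roots if t > 0]
    if not future:
        return None
    t = min(future)
    # Aim at the predicted position, not the current one.
    return t, (target[0] + vx * t, target[1] + vy * t)


hit = intercept_point(shooter=(0.0, 0.0), target=(30.0, 0.0),
                      target_vel=(0.0, 4.0), speed=5.0)
miss = intercept_point(shooter=(0.0, 0.0), target=(10.0, 0.0),
                       target_vel=(10.0, 0.0), speed=5.0)
```

In the first call the target starts 30 units away and drifts sideways at 4 units per second, so the aim point ends up well ahead of its current position. In the second call the target outruns the interceptor and the function returns `None`. A real system would also have to cope with acceleration, wind, and sensor noise, none of which this sketch handles.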

And what has been the industry's response to those blunders? The usual polished shrug: a press release for every incident, blaming the rotor, the weather, the test conditions, anything except the fact that all those failures come from the same broken mathematics running inside the drone's brain.

The Four Ways AI Hits the Real World Wall

Before we unpack a possible solution in the next section, let’s look at the failures themselves, not as accidents but as archetypes. Each one exposes a different face of the same architectural limitation, and together they map the ways a trained model collapses the moment the world stops matching the training set. Watch them carefully. The solution comes later, though I am sure you will already come up with some possibilities while examining these simplified animations.

First Type: The Map Is Not the Territory

The training data, of course, contained hills and terrain irregularities. But the battlefield in Ukraine was more nuanced than the training set anticipated. The drone flew at the altitude its model had learned was safe, straight into a hill the model had never recognized as one, because the architecture had no mechanism to notice that the world in front of it no longer matched the world it had been trained on.
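As a rough illustration of what a "mechanism to notice" could look like at its very simplest, the sketch below flags sensor readings that sit far outside the range seen in training. This is a plain per-feature z-score check, swapped in for illustration only; it is not the geometry-first approach this article argues for. The feature names (slope in degrees, ground clearance in meters), the sample numbers, and the threshold are all hypothetical.

```python
from statistics import mean, stdev

def fit_stats(training_rows):
    """Per-feature (mean, standard deviation) over the training observations."""
    columns = list(zip(*training_rows))
    return [(mean(col), stdev(col)) for col in columns]

def novelty_score(stats, observation):
    """Largest absolute z-score across features; higher means less familiar."""
    return max(
        abs(x - m) / max(s, 1e-9)  # guard against zero spread in a feature
        for (m, s), x in zip(stats, observation)
    )

def looks_unfamiliar(stats, observation, threshold=3.0):
    """True when the observation lies far outside the training distribution."""
    return novelty_score(stats, observation) > threshold


# Hypothetical training data: (terrain slope in degrees, ground clearance in meters)
training = [(2.0, 50.0), (4.0, 48.0), (3.0, 52.0), (5.0, 49.0), (1.0, 51.0)]
stats = fit_stats(training)

familiar = looks_unfamiliar(stats, (3.0, 50.0))    # gentle slope, normal clearance
unfamiliar = looks_unfamiliar(stats, (25.0, 8.0))  # steep hill, ground rushing up
```

A check this crude treats every feature independently and knows nothing about how features relate to each other, which is exactly the kind of flat assumption the rest of the piece takes aim at. It only shows that "the world no longer matches the training set" is something a system can be asked to measure.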
